Read on PR Newswire. Strategic Expansion Includes Larger Headquarters in Ann Arbor and Global Offices to Bolster Company’s Growth Trajectory in 2024 and Beyond …

Autonomous Mobility Insights: Navigating Industrial Transitions in 2024

By now, it’s clear that the COVID-19 pandemic had an unprecedented effect on all areas of our lives. Remote work …

Ask the Experts: Cybersecurity Q&A

“Safety first” is important for a production environment, and it holds especially true in the risky world of cybersecurity. How …

New Eagle Appoints New CEO to Lead Company’s Next Stage of Growth

Industry Veteran Chris Baker Brings More Than 20 Years of Experience in Embedded Software and Control Systems in High-Growth Engineering …

Choosing the Right Hydrogen Fuel Cell Controller

As the automotive industry continues to grow beyond the traditional internal combustion engine (ICE), new vehicle-powering technologies are taking their …

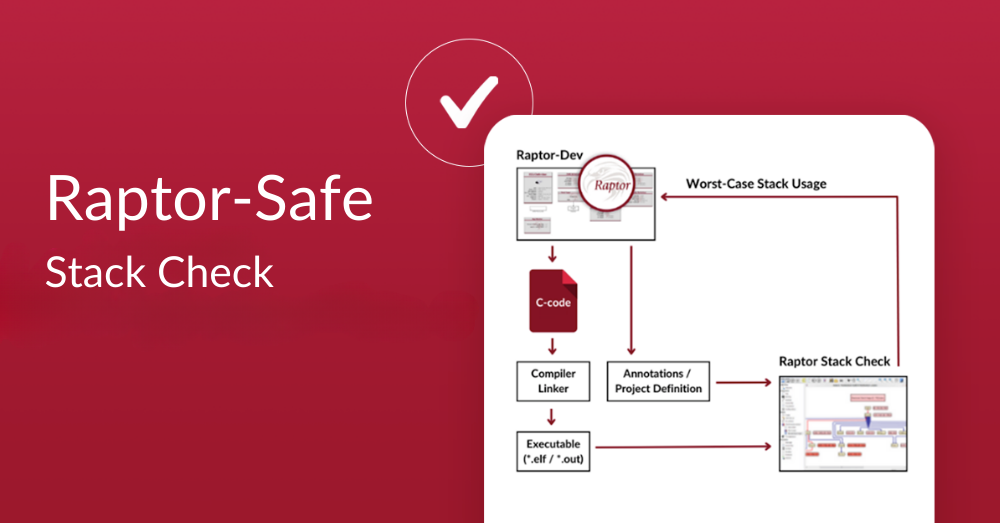

Top 3 Benefits of New Eagle’s Latest Software: Raptor-Safe Stack Check

A second set of eyes never goes amiss. Whether you’re asking your partner to read over a job application before …

New Eagle Sponsors the Indy Autonomous Challenge at CES 2024

The most exciting event of the year is right around the corner. But you won’t need your best tux or …

Ampere EV Transforms Vehicles With Atom Drive Systems

What’s your dream car? James Bond’s Aston Martin? Herbie the VW Beetle? Doc Brown’s time-traveling DeLorean? Classic cars only have …

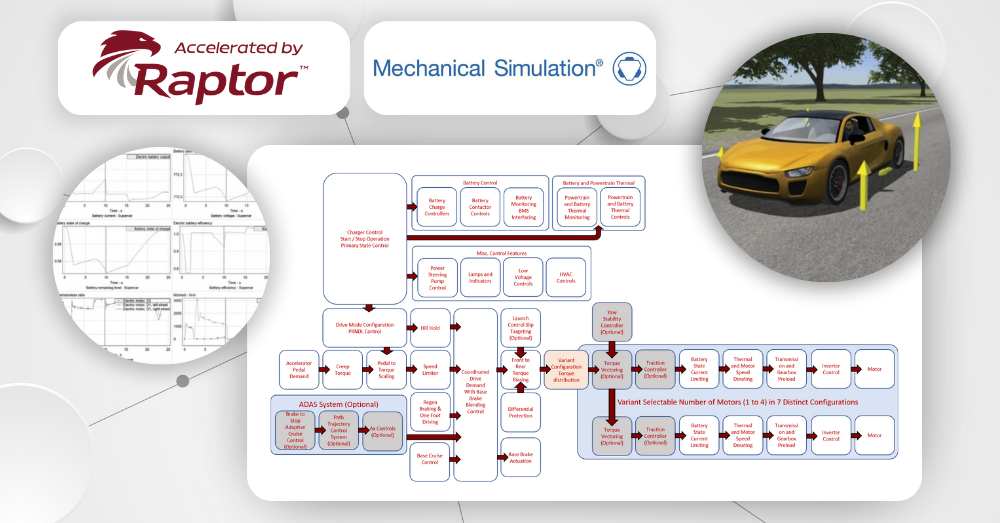

Speed Up Your EV Development With Raptor and CarSim’s Cutting-Edge Integration

Some things go better together. They’re great on their own – but when you put them together… get ready for magic! …

Meet the Mitsubishi AC Compressor, the Perfect High Volume AC Solution for EVs

At New Eagle, we know how important development time is for EV projects. And that worldwide supply chain issues have …